Benchmark AI models on your own data

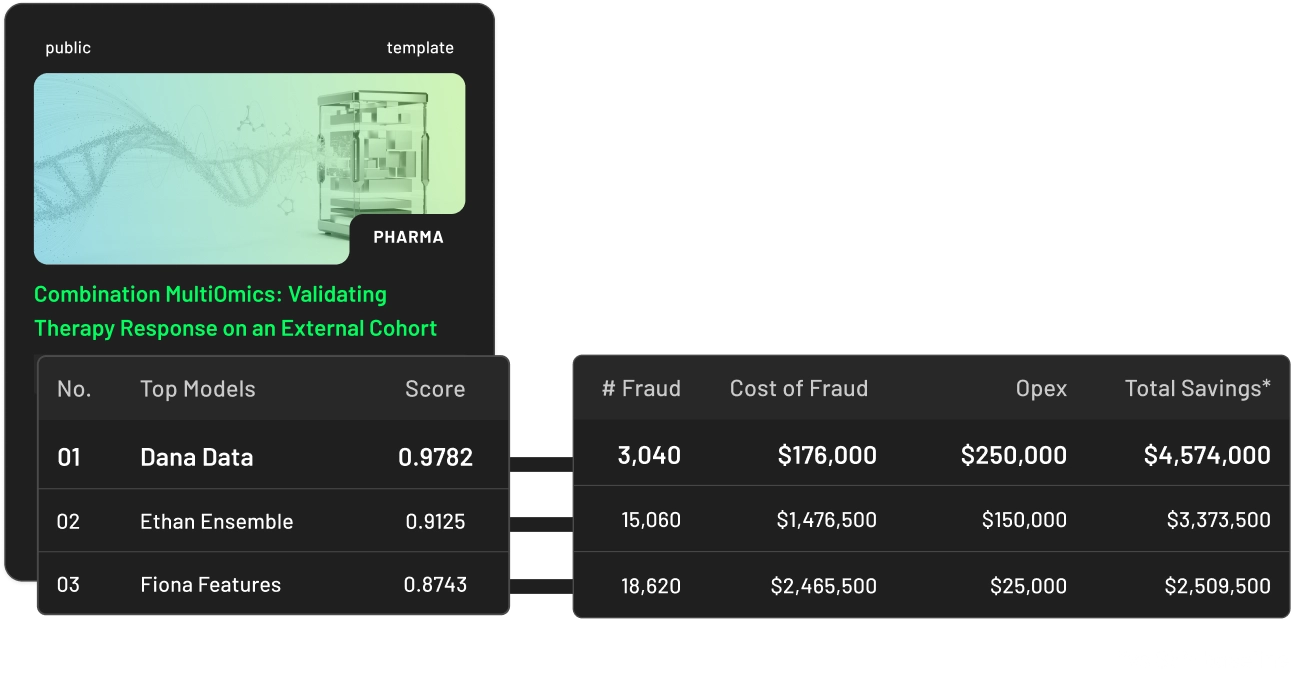

Test AI models from anyone (your team, vendors, research partners) directly on your infrastructure, against your training and test data. Your raw data never leaves your environment.

Any model, any framework, running in your infrastructure

See how every model ranks on your task

Your data never leaves your infrastructure

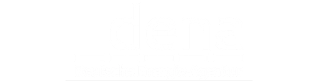

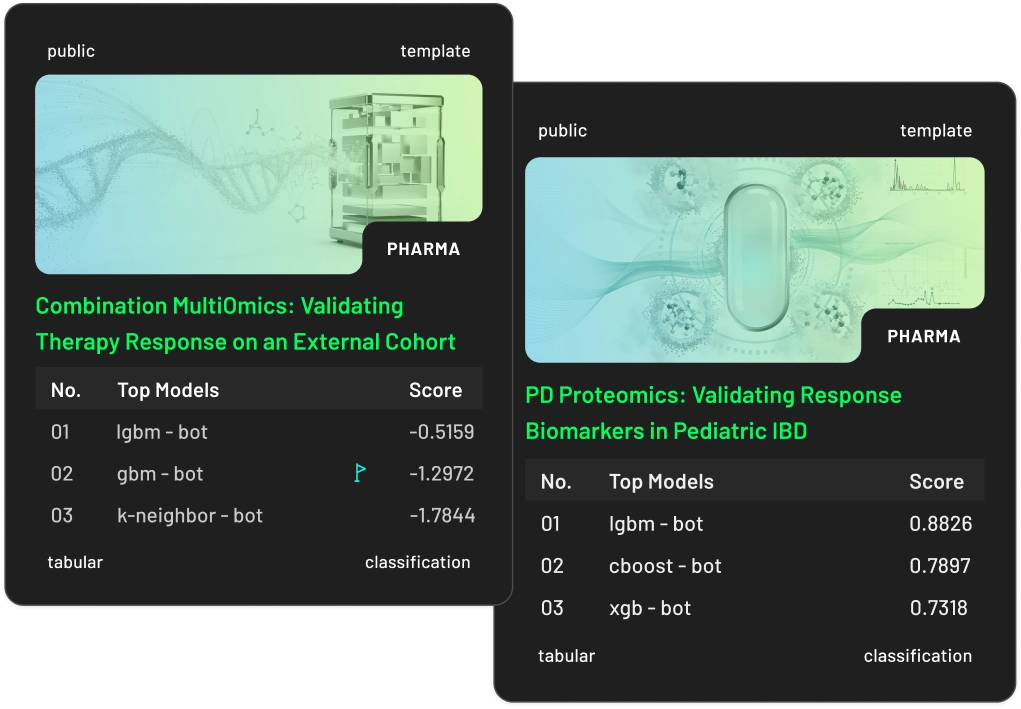

WHAT TEAMS BUILD

Active use cases on private data

Setup

Set up your first use case, onboard vendors

Deploy tracebloc in your environment

Install on any cloud, on-premises environment, or Kubernetes cluster. Runs on Docker. Setup takes about 30 minutes.

Ingest your training and test data

Your data stays on your infrastructure. tracebloc sees only metadata, never raw records. Bring any format — structured, unstructured, or mixed.

Define the task and metrics

Pick the task. Pick what counts — accuracy, latency, cost, or a custom metric. One config, applied consistently across every submission.
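To make "one config, applied consistently" concrete, here is a minimal sketch in Python. It is not tracebloc's configuration schema: `EvalConfig`, `evaluate`, the task name, and the vendor names are all hypothetical, chosen only to show one frozen config scoring every submission the same way.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence

# Hypothetical names throughout; this is NOT tracebloc's configuration schema.
Metric = Callable[[Sequence[int], Sequence[int]], float]


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the labels."""
    if not y_true:
        return 0.0
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


@dataclass(frozen=True)
class EvalConfig:
    """One task definition plus the metrics that count for it."""
    task: str
    metrics: Dict[str, Metric]


def evaluate(config: EvalConfig, y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Apply every metric in the config to a single submission's predictions."""
    return {name: fn(y_true, y_pred) for name, fn in config.metrics.items()}


# A single config object is reused for every submission.
config = EvalConfig(task="binary_classification", metrics={"accuracy": accuracy})

labels = [1, 0, 1, 1]  # made-up test labels
submissions = {
    "vendor_a": [1, 0, 0, 1],  # made-up predictions
    "vendor_b": [1, 0, 1, 1],
}

scores = {name: evaluate(config, labels, preds) for name, preds in submissions.items()}
print(scores)
```

Because the config is frozen and shared, no submission can be scored against a different task or metric set than the others.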

Invite contributors to submit models

Your team, vendors, or research partners can each submit models — open-weight, fine-tuned, or proprietary. Every submission runs in its own isolated container.

Every model runs in parallel

Contributors train and benchmark inside identical containers on your compute. Same data, same pipeline, same metrics — no manual coordination needed.
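The "same data, same pipeline, same metrics" idea can be sketched in a few lines of Python. This is an illustration only: threads stand in for isolated containers, the toy threshold models and the test set are made up, and none of the names come from tracebloc.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# Hypothetical stand-ins: a "model" is any callable from a feature to a label.
Model = Callable[[float], int]

# Shared, read-only test set of (feature, label) pairs. Values are made up.
TEST_SET = ((0.2, 0), (0.4, 0), (0.6, 1), (0.9, 1))


def threshold_model(cutoff: float) -> Model:
    """Toy model: predicts 1 when the feature reaches the cutoff."""
    return lambda x: int(x >= cutoff)


def run_submission(model: Model) -> float:
    """Identical pipeline for every submission: same data, same metric."""
    correct = sum(model(x) == y for x, y in TEST_SET)
    return correct / len(TEST_SET)


submissions: Dict[str, Model] = {
    "team": threshold_model(0.5),
    "vendor": threshold_model(0.8),
    "partner": threshold_model(0.3),
}

# Threads here only illustrate parallel runs; they provide no isolation.
with ThreadPoolExecutor() as pool:
    futures = {name: pool.submit(run_submission, m) for name, m in submissions.items()}
    results = {name: f.result() for name, f in futures.items()}

print(results)
```

Every submission goes through the same `run_submission` function, so differences in the scores come from the models rather than from how each one was tested.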

See the leaderboard

Models are ranked by accuracy, latency, and cost per request. Results are exportable, so you can share them with your team, cite them in a paper, or feed them into your procurement process.
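As a rough sketch of what a ranked, exportable leaderboard could look like, the Python below sorts results and writes them to CSV. The page does not say how the three criteria are combined, so the ordering here (accuracy first, then lower latency, then lower cost as tie-breakers) is an assumption, and the `Result` type, model names, and numbers are all made up.

```python
import csv
import io
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class Result:
    """Hypothetical leaderboard row; not tracebloc's export format."""
    model: str
    accuracy: float
    latency_ms: float
    cost_per_request: float


def rank(results: List[Result]) -> List[Result]:
    """Assumed ordering: higher accuracy, then lower latency, then lower cost."""
    return sorted(results, key=lambda r: (-r.accuracy, r.latency_ms, r.cost_per_request))


def to_csv(ranked: List[Result]) -> str:
    """Serialize a ranked leaderboard to CSV text for sharing."""
    buf = io.StringIO()
    fields = ["rank", "model", "accuracy", "latency_ms", "cost_per_request"]
    writer = csv.DictWriter(buf, fieldnames=fields)
    writer.writeheader()
    for position, row in enumerate(ranked, start=1):
        writer.writerow({"rank": position, **asdict(row)})
    return buf.getvalue()


# Made-up numbers, for illustration only.
leaderboard = rank([
    Result("model_a", accuracy=0.91, latency_ms=120.0, cost_per_request=0.004),
    Result("model_b", accuracy=0.94, latency_ms=300.0, cost_per_request=0.010),
    Result("model_c", accuracy=0.91, latency_ms=80.0, cost_per_request=0.006),
])

print(to_csv(leaderboard))
```

A weighted score would be an equally reasonable choice; the tie-break ordering is used here only because it is easy to read and explain.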

# Installs everything. Live in minutes 🤟

$ bash <(curl -fsSL tracebloc.io/i)

# Installs everything. Live in minutes 🤟

irm tracebloc.io/i.ps1 | iex

FAQs

Answers to common questions

The best tools for evaluating AI models in 2025 run benchmarks on your data, not on public test datasets. Many frameworks are built for evaluating large language models or generative AI outputs you already own, often within the LangChain ecosystem or as part of CI/CD pipelines. tracebloc solves a different problem: comparing models from multiple external vendors on your private data, side by side, without your data ever leaving your infrastructure.

Teams evaluating AI model quality without dedicated internal tooling often rely on vendor-provided benchmarks, which may not reflect performance on their specific use case or production data. There are no test sets built from your actual production distribution. tracebloc gives you a structured model evaluation workspace you deploy on your own infrastructure in minutes, without building or maintaining the tooling yourself.

Most teams default to one of three approaches: trust the vendor's benchmark numbers, run a small pilot on sanitized data, or hire a consultant. None of these reflect how the model performs on your actual production data at scale — and none of them surface the edge cases that break AI systems in deployment.

The more practical approach is a ready-made evaluation workspace that lets vendors submit models directly, runs automated evaluations against your real data in isolated containers, and surfaces results on a shared leaderboard. You get structured evaluation across multi-step workflows without building or maintaining the tooling yourself. tracebloc is built exactly for this — the workspace deploys in minutes, vendors onboard via email whitelisting, and your data never moves.

The right AI model performance evaluation metrics depend on your use case and what your AI application actually needs to do in production. tracebloc supports accuracy, F1 score, latency, robustness, memory usage, compute cost, and carbon footprint (gCO₂e) — all configurable per use case with custom evaluators, so you measure what matters for your environment, not what looks good on a generic leaderboard.
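For readers who want to see what one of these metrics actually computes, here is a minimal Python sketch of the F1 score for binary labels, registered in a small lookup table of evaluators. The `EVALUATORS` registry and the sample labels are hypothetical and do not reflect how tracebloc defines custom evaluators.

```python
from typing import Callable, Dict, Sequence


def f1_score(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> float:
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false positives
    fn = sum(t == positive and p != positive for t, p in pairs)  # false negatives
    if tp == 0:
        return 0.0  # no correct positive predictions: define F1 as 0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical registry: metric names mapped to scoring functions.
EVALUATORS: Dict[str, Callable[[Sequence[int], Sequence[int]], float]] = {
    "f1": f1_score,
}

y_true = [1, 0, 1, 1, 0, 1]  # made-up labels
y_pred = [1, 0, 0, 1, 1, 1]  # made-up predictions

score = EVALUATORS["f1"](y_true, y_pred)
print(f"F1 = {score:.2f}")
```

In this sample there are 3 true positives, 1 false positive, and 1 false negative, so precision and recall are both 0.75 and so is F1.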

Yes. tracebloc runs all vendor models in identical containerized environments — same data, same compute, same metrics — so every submission is evaluated side by side under fair conditions.

Every result appears on a shared leaderboard, making it one of the more practical AI model evaluation tools for teams managing multiple vendors or running competitive tenders. No spreadsheets. No trust issues. No guessing which test cases each vendor optimized for. Just results.
