AI Vendor Evaluation: You Decided to Buy AI. Now What?

Most AI vendor evaluations fail. Discover how to benchmark vendors on your data, measure real performance, and avoid costly mistakes.

Lukas Wuttke

Mar 10, 2026

15 min

You've had the build-or-buy conversation. You decided to buy. Maybe your data science team is stretched. Maybe the use case is too critical to spend eighteen months building from scratch. Maybe you looked at what's available in the market and concluded that a specialised vendor should outperform what you can build internally.

Good. That's the right call for a lot of organisations. But it's also where the real problem starts — because the way most enterprises evaluate AI vendors is fundamentally broken, and almost nobody talks about why.

The one thing you need to get right

Here's the core of it: the only number that matters when choosing an AI vendor is how their model performs on your data.

Not on their demo data. Not on the benchmark in their proposal. Not on a case study from a different company in a vaguely similar industry. On your data, against your existing baseline, measured on your success metrics.

This sounds obvious. Almost no one does it.

Why? Because it's structurally very difficult. And that structural difficulty is what makes the entire vendor evaluation process unreliable. But before we get into the why, it's worth understanding what's at stake when model performance isn't measured properly — because the consequences are not what most people expect.

What getting model performance wrong actually costs

A global payments provider in Frankfurt — 5 billion transactions per year — needed to improve its fraud detection. The internal system combined rules with LightGBM models and worked adequately on normal patterns, but it was failing on edge cases: low-value micro-frauds, synthetic identities, cross-border patterns. The Head of Fraud Analytics evaluated three external AI vendors.

The requirements were non-negotiable: sub-40ms latency per transaction, ≥99.5% recall on known fraud patterns, false positive rate at or below 1%, on-premises deployment, and explainable outputs for regulatory traceability. In fraud detection, these constraints aren't aspirational — they're production hard limits. A model that misses any one of them can't be deployed, regardless of how impressive its accuracy looks.

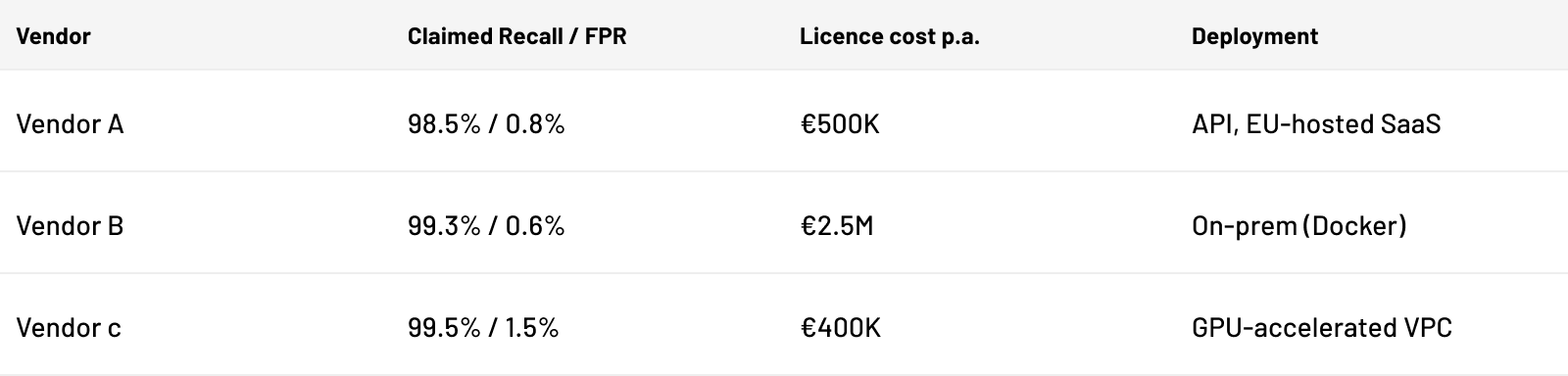

Here's what the vendors claimed:

On paper, Vendor C looks attractive — highest recall, lowest cost. Vendor B looks overpriced. Based on proposals alone, most procurement teams would shortlist C and question whether B is worth 6x the price.

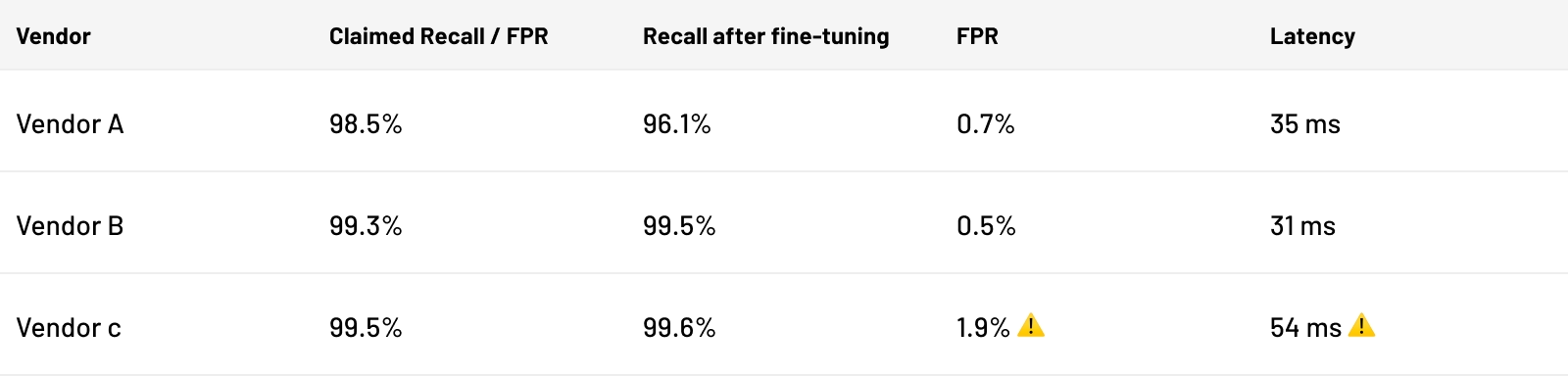

All three vendors were evaluated in parallel inside a secure environment on the company's own infrastructure. Vendors fine-tuned their models on synthesised transaction data without ever accessing raw records. Here's what actually happened:

Vendor C achieved the highest recall. It also failed on two hard constraints. Its 1.9% false positive rate — nearly double the 1% limit — would generate 95 million unnecessary friction events annually across 5 billion transactions. Its 54ms latency breached the real-time scoring requirement. High accuracy, unusable model.

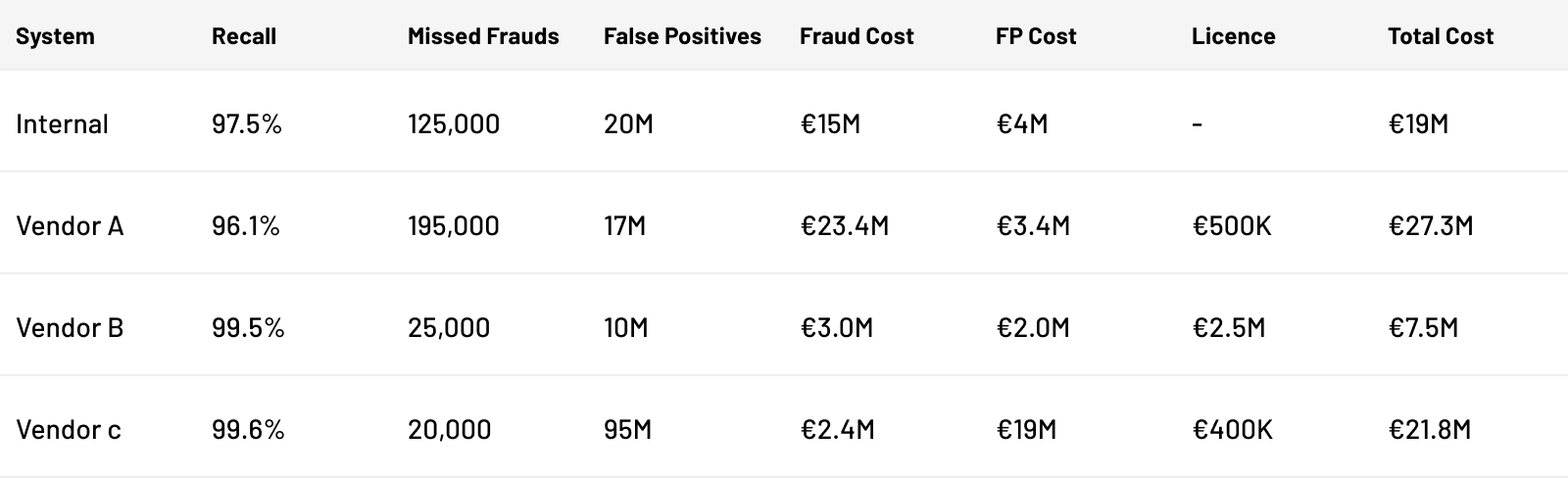

But the real lesson is in the business case. When you model the total cost — missed fraud at €120 per incident, false positives at €0.20 per event — the picture inverts completely:

Vendor A — the mid-priced option — is actually worse than doing nothing. Its lower recall means more missed fraud, and the total cost exceeds the internal system by €8.3M. Vendor C's €400K licence generates €19M in false positive costs alone — nearly 50x its price tag. And Vendor B — the one that looked overpriced at €2.5M — reduces total cost from €19M to €7.5M, saving €11.5M per year.

This is what model performance means in practice. Not a number in a vendor proposal. A direct line to your bottom line — where small differences in the right metrics compound into millions, and where the relationship between licence cost and total cost is routinely inverted. If you don't get model evaluation right, nothing else in the vendor selection process matters. And the more precisely you can measure it, the more value you capture.

Why this is so hard

AI models are not software in the way most enterprise buyers are used to thinking about software. You can't evaluate an AI model by reading documentation, checking a feature list, or running a demo. A model's value is entirely a function of how it performs on data it hasn't seen before — specifically, your data.

And this is the problem: you can't share your data.

If you're a bank, your customer financial data is regulated under GDPR, GLBA, and sector-specific rules. If you're a hospital, HIPAA governs every patient record. If you're a retailer, your transactional and behavioural data is a competitive asset you'd never hand to an external party. Even in manufacturing or logistics, operational data is proprietary intelligence.

53% of senior IT leaders in a recent survey cited data privacy as their single biggest barrier to AI adoption — above cost, above integration complexity. And this isn't irrational caution. GDPR penalties run up to 4% of global annual revenue. The risk is real.

So you're stuck. You need to test the model on your data to know if it works. You can't give your data to the vendor. And this tension — between the need for real evaluation and the impossibility of data sharing — is what breaks the entire process.

What happens instead

Because rigorous evaluation is so difficult, organisations default to proxies. They issue an RFP. They evaluate proposals — methodology documents, credentials, reference cases. They pick a vendor based on who presented best. Then they spend months on legal review, NDA negotiation, security assessment, and compliance onboarding just to get that one vendor to the point where a proof of concept can start.

The POC itself takes another 4–8 weeks and costs €20,000–€150,000. At the end of it, there's no guarantee the vendor's model beats your internal baseline. In practice, this is a common outcome — organisations invest six figures and a year of calendar time only to discover their current system is better.

And they've only tested one vendor.

A European retailer went through exactly this process for dynamic pricing. Over a year. Roughly €1 million invested. The consultancy they selected — a major name, chosen on the strength of their proposal — delivered a model that didn't outperform the retailer's existing system. They'll never know whether a different vendor might have solved the problem, because the process only had room for one bet.

This is the default. Not the exception.

What good evaluation actually requires

Strip away the process failures, and what an enterprise actually needs is straightforward:

-

Test on your data.

There is no substitute. Vendor benchmarks are a starting point for conversation, not a basis for a decision. The model must run on your historical data, and its performance must be measured against your existing system. -

Evaluate multiple vendors at the same time.

Sequential POCs are the single biggest structural failure of current procurement. They're slow, expensive, and they don't produce comparisons — they produce isolated data points. You need vendors benchmarked head-to-head, on the same dataset, with the same criteria, in the same evaluation window. -

Define your criteria before you engage anyone.

What are you measuring? What are the hard constraints? What does the model need to do in production — not just in accuracy, but in latency, explainability, and integration? Fix these before the first vendor is contacted. Otherwise every evaluation becomes a negotiation about what success means after the fact. -

Measure total cost, not licence cost.

The fraud detection case above shows this starkly — the cheapest licence was the most expensive decision by an order of magnitude. Evaluation must account for integration costs, ongoing support, retraining frequency, and most critically, the downstream business cost of model errors. A vendor that looks expensive on the line item may be the cheapest option when you model what their model performance actually saves or costs you. -

Require fine-tuning in your environment.

A vendor's off-the-shelf model is not the model that goes into production. What matters is how the model performs after it's been fine-tuned on your data. If a vendor can't fine-tune inside your infrastructure, you're evaluating a generic product, not a solution tailored to your problem. -

Test explainability before you sign.

In regulated industries — and increasingly in any enterprise context — a model that can't explain its decisions is a model you can't deploy. Don't take "we support SHAP" as an answer. Test it during evaluation. Make it a pass/fail gate.

The architectural solution

Everything above depends on solving the core paradox: you need to test on your data, but you can't share your data.

The answer is to flip the model. Instead of sending your data to the vendor, the vendor sends their model to you. The model is evaluated inside your infrastructure, on your data, and only the performance metrics leave your environment. Your data never moves.

This isn't theoretical. It's a practical application of privacy-preserving AI — and it's the architectural principle that makes real vendor evaluation possible in regulated enterprises.

tracebloc is built on this principle. It deploys inside your infrastructure — Azure, AWS, on-premises — and creates a controlled environment where multiple vendors submit their models in parallel. Each model is trained and benchmarked on the same held-out data, against the same criteria, and against your internal baseline. The result is an empirical ranking of which vendor actually performs best for your use case. Not a recommendation based on who presented well. A scoreboard based on who performed best.

The question to ask any platform — including this one — is simple: does it run on my data, inside my infrastructure, without my data leaving my environment? If the answer is no, the data privacy problem hasn't been solved. It's been deferred.

The shift

AI vendor evaluation has been treated as a procurement problem — something you manage through RFPs, legal review, and reference calls. It's not. It's a measurement problem. And until you can actually measure model performance on your data, in your environment, across multiple vendors at once, you're making a bet — not a decision.

The organisations that turn evaluation into a repeatable, evidence-based capability will compound that advantage over time. They'll deploy better models, replace underperforming vendors faster, and build genuine confidence in their AI investments.

Everyone else will keep choosing based on slide decks.

Answers to common questions

AI vendor evaluation is the process of assessing and comparing artificial intelligence vendors before a procurement decision. Most organisations still select AI tools based on proposals and presentations — a method that consistently rewards incumbents over genuinely better technology. The only evaluation that produces informed decisions is one where vendor models are tested on your data, against your existing baseline, using your success criteria. Everything else is a starting point for conversation, not a basis for selection.

A practical AI vendor evaluation guide comes down to three principles. First, define your AI vendor evaluation criteria before engaging anyone — performance metrics, hard constraints, and deployment requirements fixed in advance. Second, test in parallel: running sequential proofs of concept is too slow and too expensive to produce a real comparison. Third, model total cost rather than licence cost. AI platforms that look affordable on the line item can generate far higher cost when model errors are measured at production scale. The performance metric that matters is business outcome, not benchmark score.

Evaluating generative AI vendors for enterprise use requires security and compliance criteria alongside model performance. Data sovereignty is the first gate: does any prompt, document, or inference data leave your controlled infrastructure? For organisations under GDPR, the answer determines whether evaluation is legally permissible at all. Beyond that, assess access controls — whether the vendor's environment is isolated per client — and output risk management, since generative AI capabilities include the ability to produce incorrect or non-compliant outputs at scale. Criteria for evaluating vendors for enterprise generative AI should treat data protection as architectural, not contractual.

GenAI training vendors evaluation for large organisations requires a broader frame than single-use-case assessment. Large enterprises need AI platforms that support multiple AI implementations across business units, not a separate engagement per use case. Continuous monitoring at inference volume is essential — production AI technologies degrade as data distributions shift, and the monitoring infrastructure needs to scale with them. The most important long-term criterion is whether the vendor builds internal evaluation capability or sustains external dependency.

The criteria for evaluating AI training vendors go beyond model accuracy. Start with data handling: how does the vendor manage your proprietary data during training, and is it isolated so it cannot influence models built for other clients? Then assess fine-tuning performance specifically — the off-the-shelf model is not the production model, and the gap between baseline and fine-tuned results is where vendors differentiate. Training transparency matters in regulated industries: procurement teams increasingly need to know what data was used and what decisions were made. Finally, evaluate retraining cadence and cost, not just the initial engagement.

Criteria for evaluating gen AI training vendors share the fundamentals with general AI training assessment but carry additional weight on a few dimensions. Foundation model customisation quality — how well the vendor adapts a general-purpose model to your domain without losing breadth — is central. So is data protection during the fine-tuning process: generative models ingest large volumes of proprietary content, and the controls around that ingestion need to be explicit and auditable. Hallucination rate on your specific content type is a performance metric that generic benchmarks rarely surface but that production deployments depend on.

When you evaluate AI compliance vendors for accuracy, generic benchmark scores are the wrong starting point. Accuracy needs to be measured against your regulatory environment specifically — a model calibrated for one jurisdiction may perform differently under another framework. False negatives carry higher cost than false positives in most compliance contexts, so weight recall accordingly and test explicitly on the violation types most material to your risk profile. Explainability is not optional: compliance teams and regulators need to understand why a decision was flagged, and that reasoning needs to hold up under scrutiny. Audit trail completeness is a pass/fail criterion.

An AI vendor evaluation checklist for financial compliance should cover regulatory, technical, and commercial dimensions together. On the regulatory side: is a GDPR Article 28 DPA or sector-equivalent executed before any data access, and does the vendor's solution comply with applicable frameworks — GLBA, MiFID II, PRA rules? On the technical side: does evaluation occur inside your infrastructure, are data sources isolated per client, and are model outputs explainable in a regulator-acceptable format? Commercially: is the performance baseline contractual and tied to tested results rather than marketing claims? Total cost of ownership — including the downstream impact of model errors — must be modelled before sign-off.

Criteria for evaluating AI adoption roadmap vendors should focus on delivery credibility over strategic vision. Any vendor can produce a compelling roadmap document; the question is whether it reflects genuine understanding of your data infrastructure, governance constraints, and existing AI implementations. Look for case studies from organisations at a comparable stage of AI adoption — not just large enterprise logos. Require measurable milestones tied to performance outcomes, not activity outputs. The most valuable adoption roadmap vendors build your internal evaluation capability rather than positioning themselves as a permanent intermediary between your organisation and the AI market.

Criteria for evaluating AI process mining vendors start with a simple principle: process patterns are organisation-specific, so vendor benchmarks from other industries tell you very little. Evaluation needs to run on your own event logs and data sources. Beyond that, assess integration depth — pre-built connectors to your ERP and workflow systems versus custom development — and test on a representative sample of your messiest data, not a clean subset. The performance metric that matters is how much process improvement actually results, not analytical output quality in the abstract. Explainability of specific flagged variants is a practical requirement for operations teams to act on findings.

Criteria for evaluating AI talent vendors need to reflect the specificity of AI and ML hiring. Generic technology recruiting methodology does not translate well to roles requiring hands-on model development, evaluation infrastructure, or production ML pipeline experience. Assess the vendor's technical screening rigour directly — how they distinguish between candidates with AI familiarity and candidates who can build and evaluate production systems. If the platform uses AI-assisted candidate matching, understand what human oversight exists over those shortlists. Track record in comparable roles — data scientists, ML engineers, AI platform specialists — is more informative than overall placement volume.

Criteria for evaluating generative AI vendors with enterprise security requirements start with data sovereignty: does any prompt, document, or inference data leave your controlled infrastructure? For organisations under GDPR, this is a legal question before it is a technical one. Deployment architecture follows — on-premises or private VPC is the baseline requirement for most enterprise security policies, not a premium option. Access controls need to be verifiable at the client-environment level, not just asserted contractually. Output risk management is the final dimension: generative AI capabilities include producing incorrect or non-compliant content at scale, and the guardrails and monitoring around that need to be tested during evaluation, not assumed from documentation.

Key considerations for evaluating AI-powered fraud prevention vendors start with hard constraints, not accuracy headlines. Latency, false positive rate ceiling, and deployment architecture are pass/fail gates — a model that fails any one of them cannot go into production regardless of recall figures. Beyond that, total cost modelling is essential: at high transaction volumes, a vendor with a low licence cost and a 1.9% false positive rate can generate more cost than doing nothing. Adversarial test sets drawn from your own incident history reveal edge case performance that standard benchmarks miss entirely. Continuous monitoring in production is the final criterion — fraud tactics evolve faster than retraining cycles.

For AI compliance vendors, accuracy must be measured against your specific regulatory environment — not generic benchmarks. Explainability is a core performance metric, not an optional feature: compliance teams need to understand why the model flagged or cleared a decision, and regulators increasingly require a complete audit trail. For vendor evaluation criteria for AI security solutions, adversarial robustness matters more than benchmark accuracy. Security AI faces adversarial inputs by design. Evaluate false positive management carefully — alert fatigue from excessive false positives is itself a security risk. In both cases, continuous monitoring and clear update cadence are as important as initial model performance.

Criteria for evaluating agentic AI vendors on-premises centre on three things: whether the full agentic loop runs within your infrastructure with no external dependencies, whether access controls are scoped tightly enough to limit AI agent actions to defined boundaries, and whether human oversight mechanisms exist to intercept and override autonomous decisions. Auditability of every agent action is non-negotiable for regulated environments. For AI process mining vendors, the evaluation principle is the same — performance on your own data sources matters far more than benchmarks from other industries. Actionability of outputs is the relevant performance metric: not analytical quality in isolation, but how much process improvement actually results.

The core data security challenge in AI vendor evaluation is structural: valid model testing requires your data, but sharing it with external vendors creates regulatory and competitive exposure. The architectural solution is to invert the flow. Instead of sending data to vendors, vendors send their models to you. The model is trained and evaluated inside your infrastructure; only performance metrics leave your environment. Data sources never move. This approach aligns with GDPR, HIPAA, and sector-specific data protection requirements by design. It also enables parallel evaluation — multiple vendors assessed on the same held-out data with the same criteria simultaneously — which is how vendor performance evaluation solutions produce actionable business insights rather than isolated data points. The output is a scoreboard: which model performs best on your use case, at what total cost, with what risk profile. That is what build trust in AI procurement looks like in practice — evidence-based selection, not credential-based selection.

AI platforms built for ongoing vendor evaluation should support continuous monitoring of production models, enable repeatable benchmarking as new AI technologies enter the market, and maintain strict access controls over every vendor interaction. The ability to evaluate AI agents, foundation models, and specialised tools within the same infrastructure — without rebuilding compliance architecture for each new engagement — is what separates platforms that scale from those that serve a single procurement cycle. The organisations that treat AI vendor evaluation as a continuous capability, not a one-time event, are the ones that deploy better models faster and make consistently informed decisions about when to replace what they have.