19 Real-World Federated Learning Applications (2026)

Real-world federated learning applications across healthcare, finance, manufacturing, and more — with live examples from the tracebloc platform.

Lukas Wuttke

Mar 17, 2026

25 min

What Is Federated Learning? (Quick Overview)

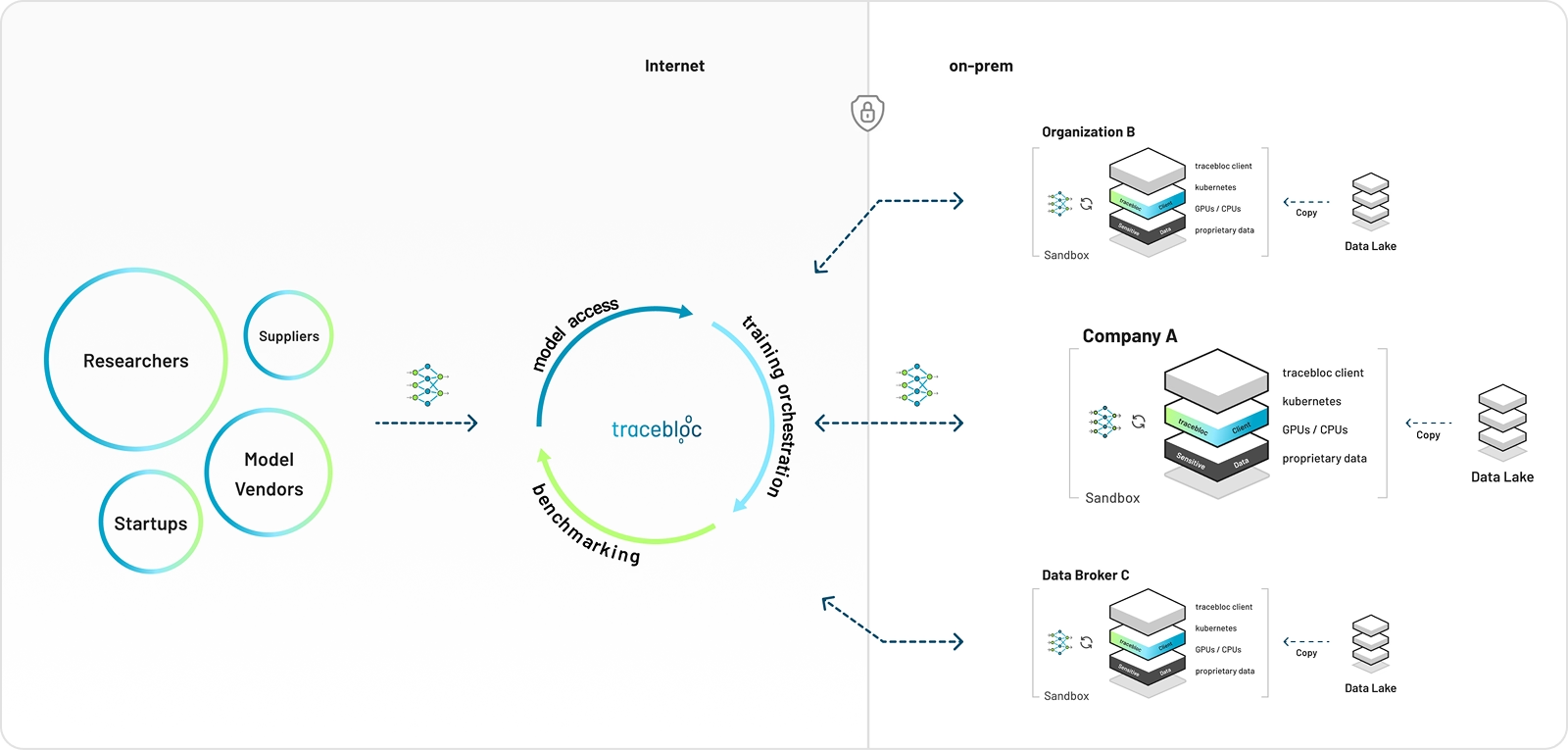

Federated learning (FL) has evolved from a research concept into a practical enterprise architecture for building AI without centralising data. Instead of moving datasets to a central server, a machine learning model is sent to where local data already lives. It trains locally on each participant's data and returns only encrypted model updates — never the raw records — to a shared global model.

This approach changes not only infrastructure but also governance, compliance, and cross-organisation collaboration. The result is a system where intelligence is shared but data is not.

Important: Federated learning is not the same as distributed training. It is a coordinated system combining orchestration, encryption, optimisation, and monitoring across participants who may span different organisations, jurisdictions, and infrastructure environments. With techniques like secure aggregation and differential privacy, participants collaborate without raw data ever being exposed to one another.

tracebloc's approach to federated learning

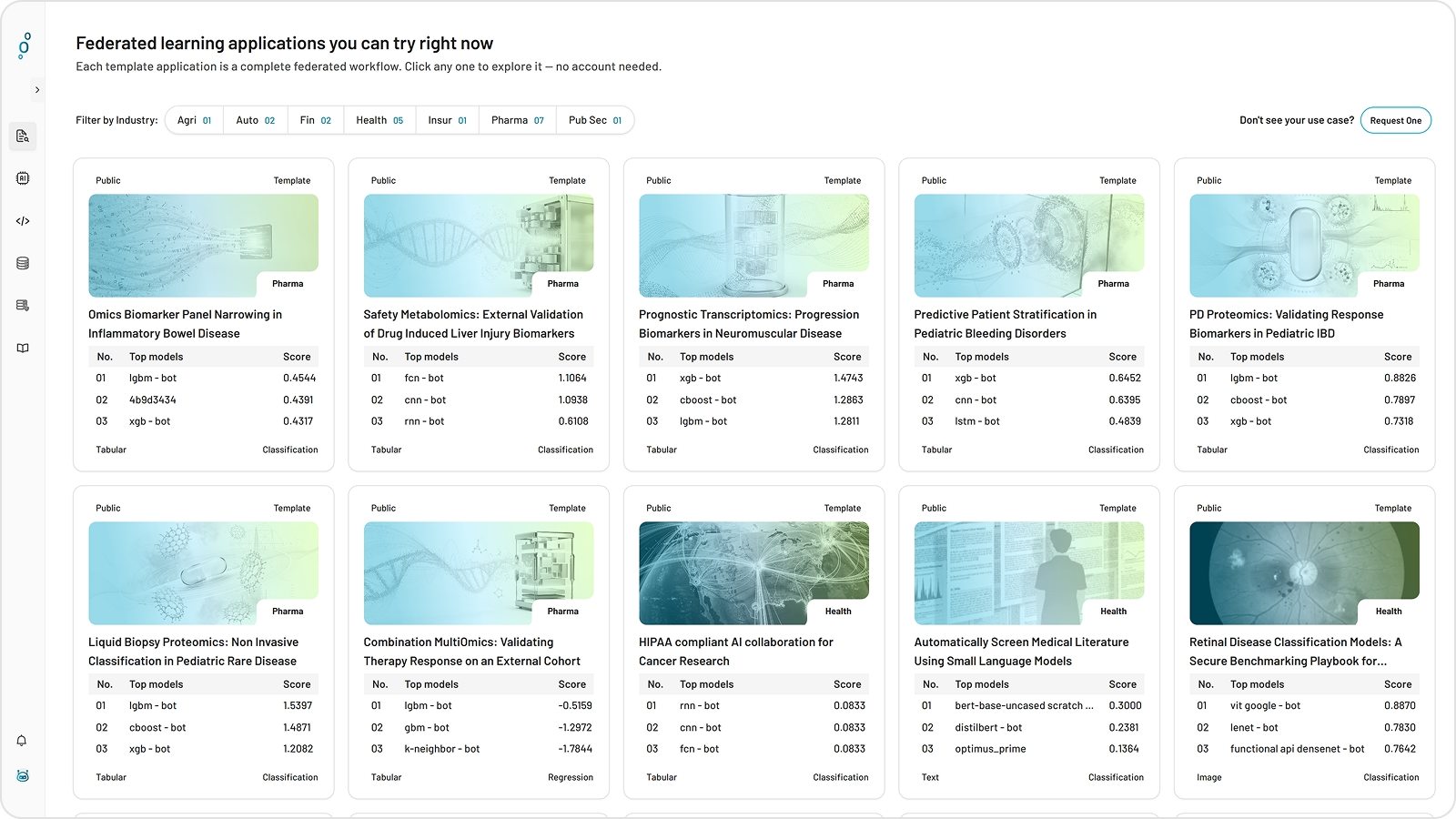

Federated Learning Applications Across Industries

Federated learning has traction wherever valuable data cannot move — and that describes most of the highest-value AI opportunities in the enterprise today. Healthcare records, financial transaction histories, industrial sensor logs, clinical trial data: the datasets that would produce the most accurate models are precisely the ones that cannot be centralised, shared, or handed to an external vendor. The result is a gap between AI's potential and what organisations can actually build. Federated learning closes that gap by bringing the model to the data rather than the other way around — enabling collaborative training and secure model evaluation without a single raw record leaving its source. The tracebloc platform makes this deployable across 19 ready-to-run use cases, spanning healthcare, pharma, finance, manufacturing, insurance, agriculture, and public sector.

Healthcare

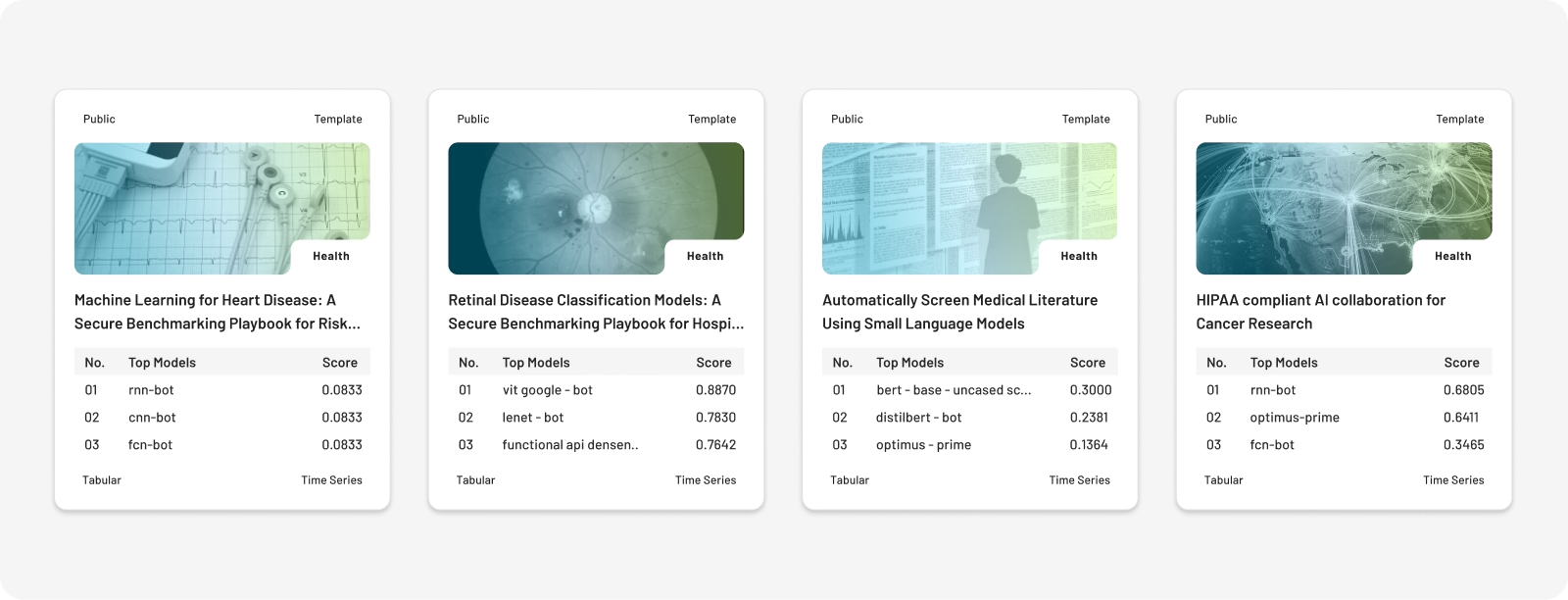

Healthcare is the most active frontier for federated learning applications. Hospitals and research centres hold vast volumes of patient data that cannot be centralised due to HIPAA, GDPR, and institutional governance requirements. Federated architectures allow organisations to train and evaluate models collaboratively- - from radiology and oncology to cardiology and clinical NLP — while patient records remain inside each institution.

- HIPAA Compliant AI Collaboration for Cancer Research Enables HIPAA-compliant cross-border AI collaboration to build models that help oncologists determine the most effective radiation dosage for individual prostate cancer patients based on their clinical profile.

- Automatically Screen Medical Literature Using Small Language Models: Securely benchmarks NLP and small language models for automated classification and triage of biomedical literature abstracts across clinical specialties like cardiology, oncology, and neurology.

- Retinal Disease Classification Models: A Secure Benchmarking Playbook for Hospital AI: Provides a secure benchmarking framework for hospitals to evaluate and compare multiple retinal disease detection AI models on their own patient imaging data without exporting any PHI.

- Machine Learning for Heart Disease: A Secure Benchmarking Playbook for Risk Prediction: Benchmarks machine learning models for heart disease risk prediction using structured EHR data (ECG, cholesterol, exercise response) within a secure evaluation workflow that protects patient records.

- AI-Powered Breast Cancer Screening and Image Classification for Radiology: Benchmarks AI image classification models on private breast cancer screening data to help radiologists prioritize scans, reduce false negatives, and improve diagnostic quality.

checkout our healthcare usecases

Pharma

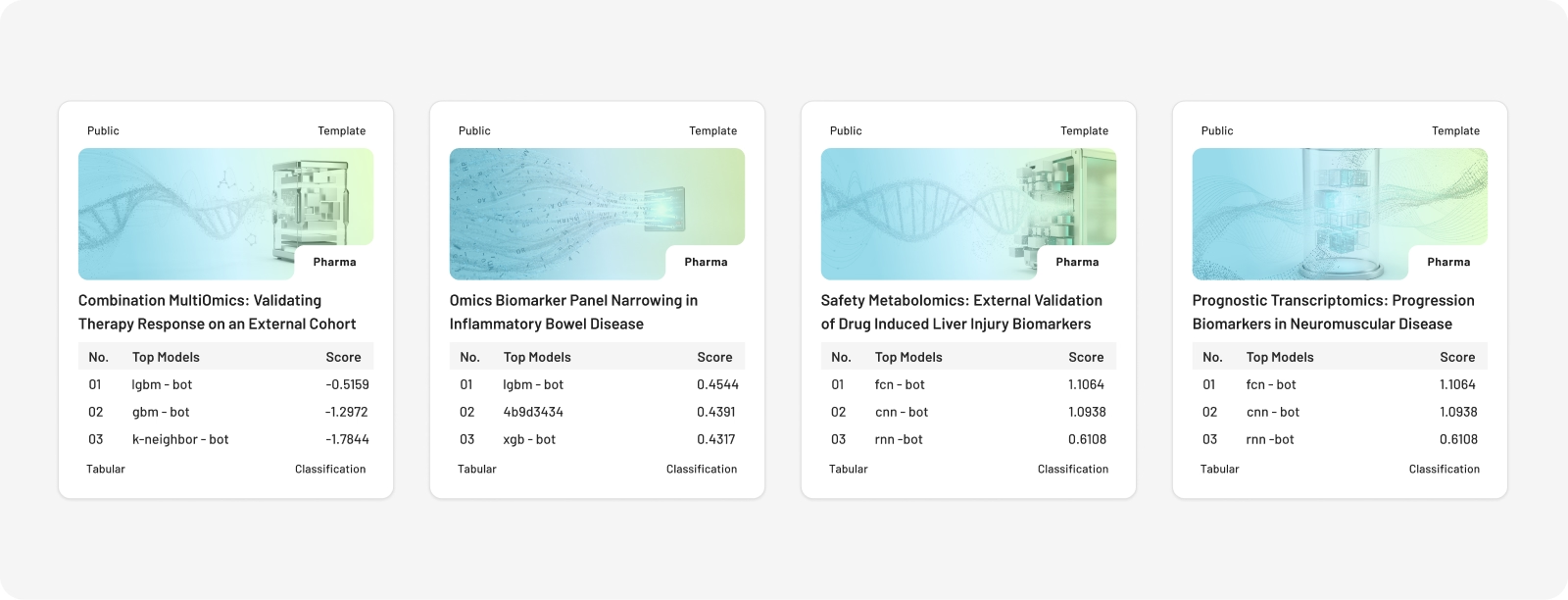

Pharmaceutical companies run some of the highest-stakes Al development in any industry — and the data they need to validate their models is held by clinical institutions that cannot share it. tracebloc's pharma templates address the two most common bottlenecks in Al-driven drug development: validating candidate biomarker panels against real patient data before committing to a Phase III trial, and confirming that prognostic models built on internal cohorts actually generalise to independent clinical populations.

- Omics Biomarker Panel Narrowing in Inflammatory Bowel Disease: Securely benchmarks candidate biomarker models against real pediatric longitudinal multi-omics data to validate which biomarker panels predict treatment response in IBD before committing to Phase III trials.

- Safety Metabolomics: External Validation of Drug Induced Liver Injury Biomarkers: Enables pharma companies to externally validate their drug-induced liver injury (DILI) safety biomarker models on independent clinical metabolomics data without the data leaving the institution.

- Prognostic Transcriptomics: Progression Biomarkers in Neuromuscular Disease: Validates prognostic transcriptomic biomarker models for predicting disease progression rates in children with neuromuscular disorders on an independent external clinical dataset.

- Predictive Patient Stratification in Pediatric Bleeding Disorders: Benchmarks multi-omics patient stratification models to classify pediatric bleeding disorder patients (hemophilia, von Willebrand) into clinically meaningful subgroups before gene therapy trial enrollment.

- PD Proteomics: Validating Response Biomarkers in Pediatric IBD: Externally validates pharmacodynamic proteomic biomarker models that predict early anti-TNF treatment response in pediatric IBD on an independent longitudinal cohort

- Liquid Biopsy Proteomics: Non Invasive Classification in Pediatric Rare Disease: Evaluates whether blood-based proteomic profiling can classify pediatric rare diseases non-invasively as an alternative to painful tissue biopsies and other invasive diagnostic procedures.

- Combination MultiOmics: Validating Therapy Response on an External Cohort: Validates multi-omics response prediction models for combination targeted therapies in pediatric oncology by benchmarking them against an independent external clinical dataset.

Finance

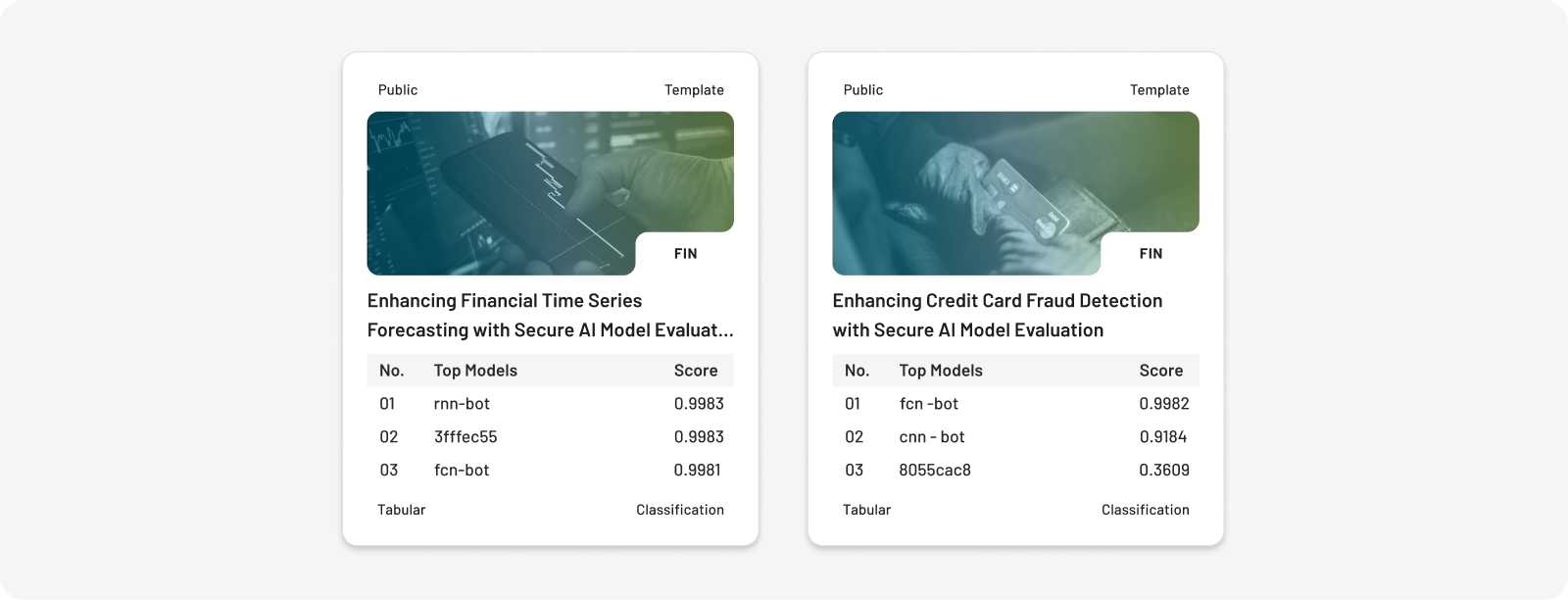

Financial institutions need to evaluate external AI models against their own proprietary trading and transaction data. The challenge: that data cannot leave the institution's infrastructure, yet generic benchmark results tell you nothing about how a model will actually perform on your specific dataset. Federated evaluation solves this by bringing vendor models to the bank's data, not the other way around — with no data or IP leaving, and up to 100 models benchmarked in parallel.

- Enhancing Financial Time Series Forecasting with Secure AI Model Evaluation: Enables trading desks to securely benchmark external AI forecasting models from quant firms and research labs on proprietary financial data without any data or IP leaving their environment.

- Enhancing Credit Card Fraud Detection with Secure AI Model Evaluation: Benchmarks AI fraud detection models on private credit card transaction data to identify which tabular classification model truly performs best at reducing missed fraud and false positives.

Automotive

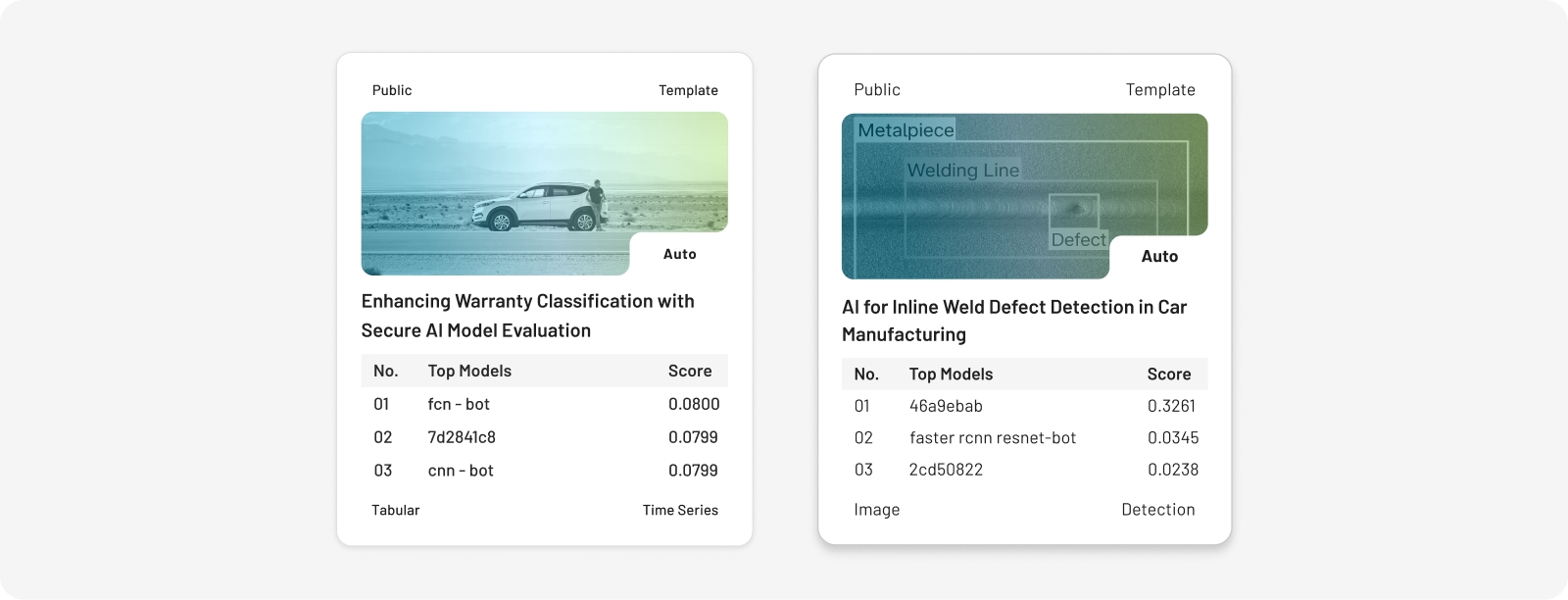

Industrial environments generate large volumes of operationally sensitive data — weld imagery, sensor logs, claims records — that cannot be shared across competitors or uploaded to external platforms. AI vendors routinely claim strong performance on public benchmarks that do not reflect real production conditions. Federated evaluation lets manufacturers benchmark models against their own data without exposing it.

The two tracebloc manufacturing templates below tackle the highest-cost quality problems in automotive production: detecting weld defects in real time at line speed, and classifying warranty claims to surface hidden manufacturing failures before they escalate.

- Enhancing Warranty Classification with Secure AI Model Evaluation: Benchmarks AI models for classifying automotive warranty claims into root cause categories on private OEM data to improve detection of rare but critical hidden manufacturing issues

- AI-Powered Weld Inspection System and NDT Testing in Automotive Manufacturing: Benchmarks AI object detection models for automating weld quality inspection and non-destructive testing (NDT) in EV manufacturing to detect subtle defects like micro cracks and porosity in real time.

Explore tracebloc’s practical FL applications in manufacturing

Insurance

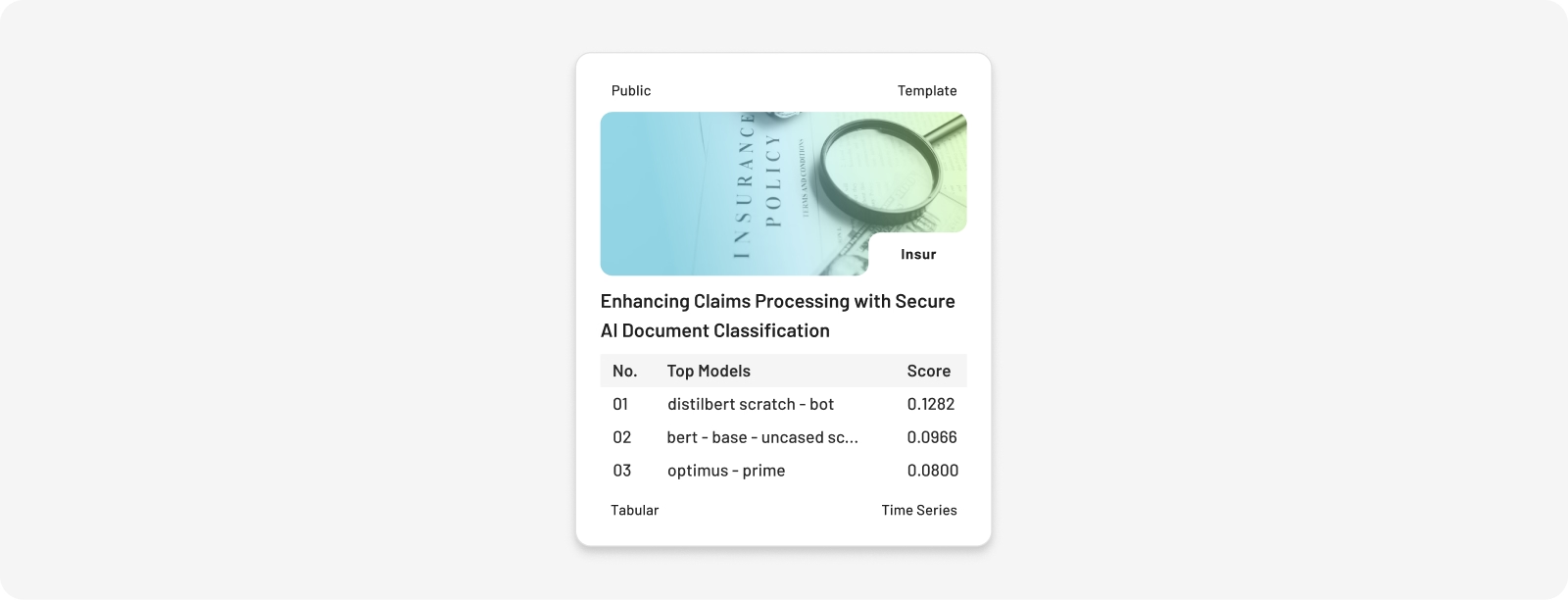

Insurance companies process thousands of incoming claims as PDFs, emails, and scanned forms — each containing multiple heterogeneous documents that must be sorted, classified, and prioritised. NLP models that perform well on public benchmarks frequently underperform on an insurer's own proprietary document corpus. Federated evaluation allows insurers to identify the best-performing model on their real claims data without any vendor accessing it.

- Enhancing Claims Processing with Secure AI Document Classification: Benchmarks NLP models for automated classification of heterogeneous insurance claims documents (PDFs, emails, scanned forms) to streamline triage and reduce manual processing workload.

Explore tracebloc’s hands-on AI case studies for insurance

Agriculture

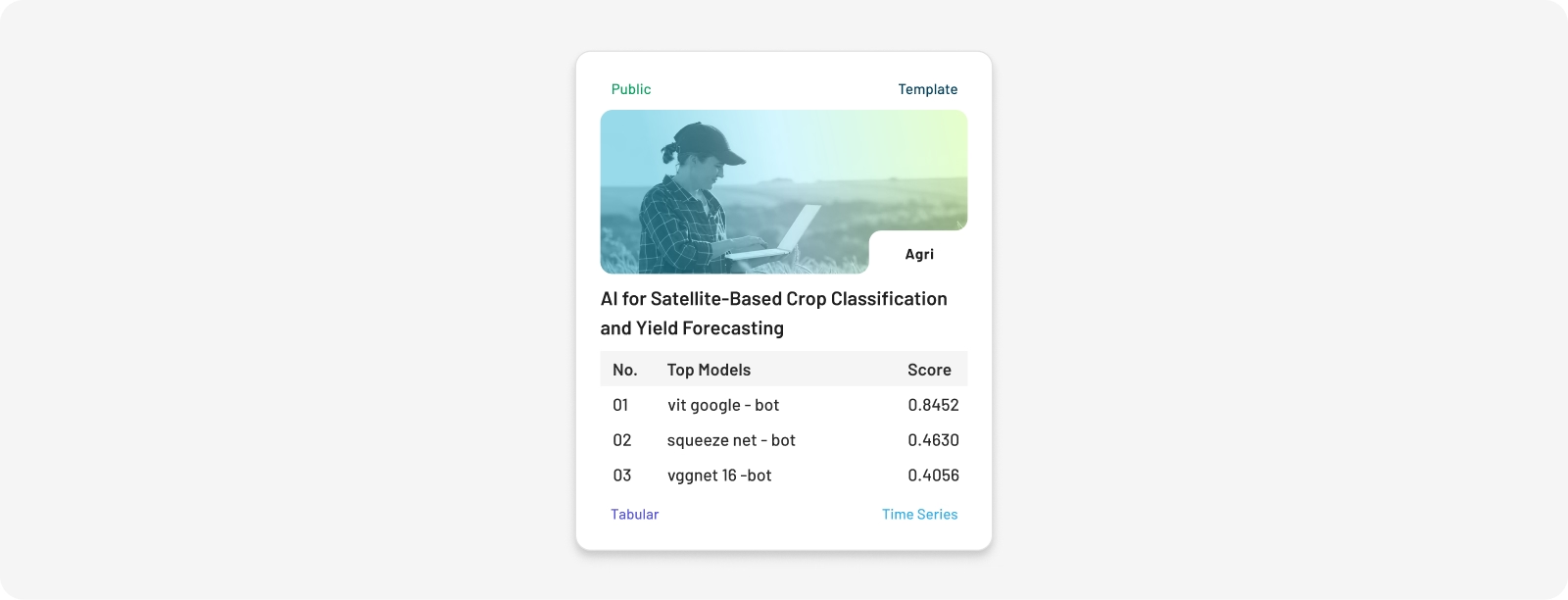

Agricultural monitoring businesses and farming cooperatives collect satellite imagery and environmental sensor data that is commercially sensitive and geographically specific. A model that generalises well across soil types, climate zones, and crop varieties requires evaluation on genuinely diverse real-world data — not public benchmark tiles. Federated evaluation lets agritech vendors test their models against operational field data without the data leaving the company's infrastructure.

- AI for Satellite-Based Crop Classification and Yield Forecasting: Benchmarks satellite vision AI models for classifying crop types and optimizing yield forecasts on proprietary Sentinel-2 imagery across diverse soil and climate zones.

Explore tracebloc’s agriculture use case examples in action

Public Sector

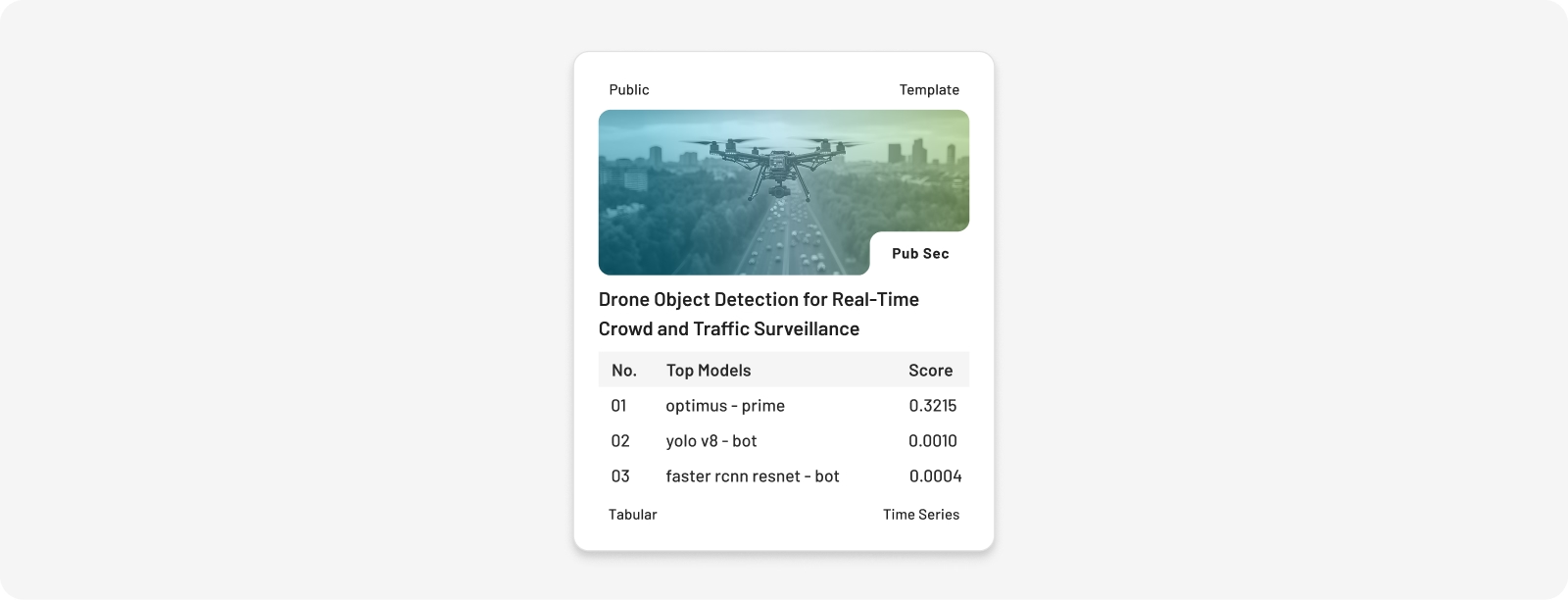

Government agencies and public safety organisations need AI systems that perform under real operational conditions — smoke, low light, high crowd density — but cannot share sensitive operational footage with external AI vendors for benchmarking. Federated evaluation enables city authorities and UAV analytics companies to identify the best-performing detection model on their actual aerial footage, without vendors ever accessing the raw data.

- Drone Object Detection for Real-Time Crowd and Traffic Surveillance: Benchmarks UAV computer vision models for real-time detection of people, vehicles, and emergency units in drone footage for traffic monitoring and crowd surveillance under challenging conditions.

Explore how tracebloc’s supports real public sector FL applications

Key Benefits of Federated Learning

Federated learning doesn't just solve a data privacy problem — it unlocks capabilities that centralised AI cannot offer. Here are the core advantages organisations gain, demonstrated across every platform example above.

-

Data stays where it belongs.

Raw data never leaves the device or organisation that owns it — from clinical cohorts to automotive weld datasets to proprietary trading histories. -

Compliance by design.

Because data doesn't cross borders or jurisdictions, GDPR, HIPAA, CCPA, and BaFin compliance become natural outcomes of the architecture rather than an afterthought. -

Access to data that was previously off-limits.

Proprietary trading data, clinical cohorts, and operational production datasets become accessible to AI development for the first time — without requiring data to move. -

Vendor claims verified on your own data.

The platform consistently exposes gaps between what vendors claim on public benchmarks and what they actually deliver on an organisation's real dataset — in crop classification, weld inspection, and claims processing alike. -

Better models through diversity.

Models fine-tuned on real, diverse operational data consistently outperform generic pretrained alternatives. The satellite crop classification results demonstrated this directly. -

Faster, cheaper vendor evaluation.

tracebloc delivers 10× faster evaluation at 70% lower cost compared to traditional procurement pipelines, by eliminating data logistics and compliance bottlenecks entirely. -

IP and data sovereignty for all participants.

No vendor or partner ever sees raw data. This makes federated evaluation viable in competitive industries where traditional evaluation would be legally or commercially impossible.

Challenges and Limitations of Federated Learning

Federated learning solves real problems — but introduces its own engineering and governance challenges. Understanding these is essential before committing to a federated architecture.

-

Communication overhead.

Exchanging model updates across many participants requires multiple rounds of communication. In large deployments, this overhead can become a bottleneck. Compression techniques and algorithms like FedAvg (McMahan et al., 2017) help, but require careful tuning per deployment. -

Non-IID data (statistical heterogeneity).

Data across participants is rarely identically distributed. Models trained on one geography, institution, or production environment can fail to generalise without exposure to diverse data from multiple sources. -

Inference and poisoning attacks.

Even without accessing raw data, adversaries can attempt to infer sensitive information from model updates or corrupt the global model through manipulated gradients. Defences include differential privacy and secure aggregation protocols. -

System heterogeneity.

Participants operating across different hardware, software frameworks, and network environments add real engineering complexity — particularly where edge inference constraints apply, as in the weld inspection use case. -

Data standardisation across participants.

Feature definitions, labeling conventions, and schema alignment must be agreed upon before any training can begin. This governance work is consistently the most underestimated phase of a federated project.

Why Most Federated Learning Initiatives Stall Before Deployment

Federated learning is technically proven. The reasons projects fail are rarely about the algorithm — they are about everything surrounding it. Standing up a federated initiative from scratch requires solving several hard problems simultaneously:

- Orchestration infrastructure must be built and maintained across all participant environments

- Client environments must be configured, tested, and kept in sync across organisations

- Data standardisation must be negotiated across participants before a single training round can run

- Governance frameworks — covering data access, model ownership, and update policies — must be agreed upon before training begins

Each of these is a project in its own right. Most organisations must tackle all four at once, without a blueprint, under time pressure from stakeholders expecting results. The outcome: most initiatives stall in the setup phase, teams get stuck in infrastructure, and the gap between proof of concept and production never closes.

How tracebloc Powers Federated Learning in Production

Most federated learning tools hand organisations a set of components and expect them to figure out the rest. tracebloc ships pre-built templates for real AI training and evaluation challenges — including every use case described in this article — so teams can configure and deploy without building from scratch.

- Pre-built templates for real AI challenges across healthcare, finance, manufacturing, insurance, agriculture, and public sector

- The client layer handles local training on private data, returning only weight updates to the orchestrator — raw data never moves

- A metadataset gives participants statistical visibility into the data landscape (size, class distribution, quality scores) without exposing any underlying records

- Training runs across all client sites in parallel, with weight updates merged into a single global model and real-time performance tracking

- Every evaluation is transparent, auditable, and production-grade from day one

What Problems Federated Learning Actually Solves

Most AI projects stall not because of algorithm limitations but because of data access problems. Federated learning addresses three persistent challenges that block AI deployment in regulated and competitive industries:

- Maintaining ownership of data local to each organization

- Enabling collaboration without exposing confidential records

- Improving model performance by learning from diverse datasets

- Allows models to visit data for evaluation and benchmarking

Frequently asked questions about AI vendor evaluation

Answers to common questions

Healthcare, finance, and manufacturing are the most active sectors. All three share the same profile: large volumes of sensitive data, strong regulatory requirements around data sharing, and a clear business incentive to build better AI without centralising data. The tracebloc platform operates across all six industries covered in this article.

Distributed learning splits a single large dataset across multiple machines to speed up training — all data is still owned by one organisation. Federated learning trains across data held by multiple independent organisations or devices, where data never leaves its owner. The key distinction is data ownership and privacy: federated learning is designed for scenarios where centralising data is not possible or not permitted.

FedAvg (Federated Averaging) is the foundational aggregation algorithm for federated learning, introduced by McMahan et al. at Google in 2017. Each participant trains a local model, then sends model weights — not data — to a central aggregator that averages them into a new global model. This process repeats over many rounds and is the basis for most production federated learning systems today.

The core guarantee is that raw data never leaves the participant's environment. Additional layers are typically added: differential privacy adds calibrated noise to model updates so individual records cannot be inferred; secure aggregation uses cryptographic protocols so the central server sees only the combined result, never individual participants' updates. Every tracebloc template operates on this principle — vendors receive only performance metrics, never data.

The five most commonly cited production challenges are: communication overhead from multiple update rounds; non-IID data making model convergence slower; inference and poisoning attacks targeting the update protocol; system heterogeneity across participant environments; and data standardisation requirements that must be resolved before training begins. Of these, data standardisation is most consistently underestimated by teams new to federated deployments.

Every use case in this article is available as a live, deployable template on the tracebloc platform. Visit ai.tracebloc.io/explore to browse all templates, review the underlying challenge descriptions, and request access to run evaluations on your own data.

References

McMahan, B. et al. (2017). Communication-efficient learning of deep networks from decentralized data. AISTATS 2017.

Pati, S. et al. (2022). Federated learning enables big data for rare cancer boundary detection. Nature Communications.

Ogier du Terrail, J. et al. (2023). Federated learning for predicting histological response to neoadjuvant chemotherapy. Nature Medicine.