What is federated learning? The tracebloc guide to privacy-preserving AI

Explore how privacy-preserving AI unlocks collaboration on sensitive data

Lukas Wuttke

Feb 11, 2026

9.5 min

Data is both an organization’s most valuable asset and its greatest liability. As artificial intelligence moves from "experimental" to "mission-critical," companies are hitting a wall: the data privacy paradox. To build high-performing AI, you need massive, diverse datasets. Consequently, accessing a vast amount of data is becoming increasingly important for the future success of any company.

When data is siloed to avoid risk, it becomes inaccessible for innovation. The problem is how to break these silos to source the high-quality, varied datasets required to train a model that actually performs in the real world.

The primary crisis for AI development is reaching relevant, diverse data necessary to build better models for your organization. When data is locked away in silos to stay safe, it becomes inaccessible for innovation. The problem we are addressing is how to break these silos to source high-quality, feature-diverse datasets required to train a model that actually performs in the real world—without ever moving the data from its secure location. This is why the industry is shifting toward a distributed paradigm. In this article, we will explore the mechanics, definitions, and advantages of distributed ML.

tracebloc's approach to federated learning

Understanding the basics

What is federated learning?

Federated Learning (FL) is a distributed approach to machine learning where data remains on the edge rather than being gathered in a central repository. Instead of the traditional method of moving data to a central server, the model is moved to the data. The core principle of this architecture is that sensitive information stays on the edge with the data owner, eliminating the need for data to be shared or exposed.

It is the architectural embodiment of the “model-to-data" philosophy. Instead of the traditional centralized model, where data is stored in one place, the model goes to the data.

Federated AI represents a move toward "privacy-preserving AI." It allows for the creation of global intelligence without the need for global data surveillance. It’s about building systems that respect sovereignty while benefiting from collective knowledge.

Federated learning versus traditional machine learning

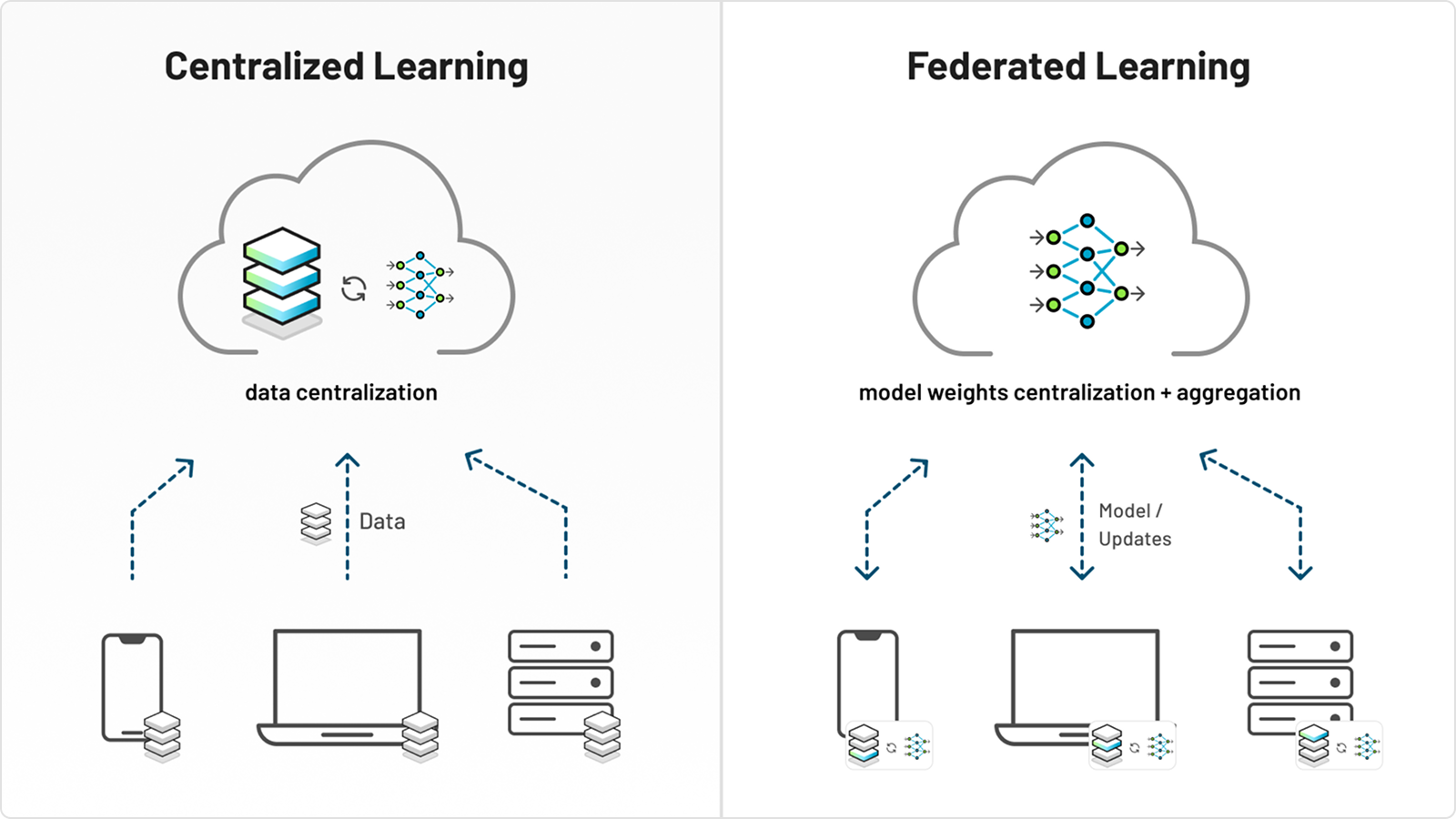

The main difference between federated learning and traditional, centralized machine learning is where the data resides during the training.

- Traditional machine learning (centralized). Data scientists collect data from various sources and store it in one place, such as a data center. The machine learning model is trained directly on this consolidated dataset.

This method can offer advantages like straightforward data access and simpler development. However, it may also create significant privacy risks if the central data repository is compromised and it only works if data can be centralized.

- Federated learning (distributed). The machine learning model is sent to the data, and participants train the model on it. Only the model updates (weight files) are then sent back to a central server for aggregation. This process allows models to learn from diverse datasets without accessing sensitive information from any single participant.

The difference between centralized learning and federated learning.

While centralized machine learning is well established and often easier to implement, federated learning is gaining traction. It can address data sovereignty concerns, reduce bandwidth requirements, and allow for model training on data that may be inaccessible.

What is federated learning data?

Unlike a static database, federated data stays on edge devices, local servers, or within private clouds. This data is often "siloed," meaning it cannot be easily combined due to legal, technical, or competitive reasons. Federated learning treats these silos not as obstacles, but as secure training grounds.

How federated learning works

To understand how federated learning works, imagine a cycle of constant improvement. This process never needs the transfer of raw, private data.

1. The global model initialization

The process begins with a centralized model, a baseline algorithm hosted on a secure server. This model might use pre-training on public data or simply initialize with random weights.

2. Distribution to the edge

The central server identifies a set of participants (nodes). These could be edge devices like smartphones and IoT sensors, or "siloed" nodes like hospital databases. The server sends the current version of the model weights and training plan to these nodes.

3. Training locally

This is where the magic happens. Each participant trains the model using its own data locally. Whether it is financial transaction logs or sensitive patient data, the raw information stays behind the organization’s firewall, always within the premises of the organization. The local hardware does the heavy lifting, using its own data to fine-tune the model's parameters.

4. Sending updates, not data

Once the node has trained a model on its local slice of the overarching dataset, it doesn't send the model back. Instead, it sends a "model update" or a summary of what it learned (mathematical gradients). These updates are typically encrypted and 'masked' to significantly reduce the risk of reconstructing raw data.

5. Aggregation

The central server receives updates from hundreds or thousands of nodes. It averages these updates to create a new, improved version of the global model. This new model now possesses the "wisdom of the crowd" without ever having seen a single individual record.

6. The iterative loop

This training process repeats until the model reaches the desired accuracy. Federated learning enables a level of scale that was previously impossible due to bandwidth and privacy constraints.

Why organizations need federated AI

For years, the gold standard for AI was the centralized model. You would build a massive data lake, hire a team of data scientists, and let them work.

However, the centralized model is failing for several reasons. While it remains a viable approach when data can be easily gathered, such as the public internet data used to train models like ChatGPT, it is a non-starter for the enterprise.

Industry and government data are often held under strict privacy constraints and siloing, making it virtually impossible to train high-performing models using traditional methods.

This is the exact deadlock that federated AI solves: it bypasses the need for centralization entirely, unlocking the ability to train on sensitive, diverse data that was previously out of reach.

1. Compliance and regulation

With the rise of GDPR, HIPAA, CCPA, and AI-specific regulations, moving private data across borders is becoming a legal minefield. Federated learning allows companies to remain compliant by design. If the data remains within its jurisdiction, the compliance burden significantly reduces.

2. Unlocking high-stakes data

In any highly regulated sector, private data is both a critical resource and a significant responsibility. Whether it is sensitive financial records, proprietary industrial telemetry, or confidential citizen information, this data is often locked behind layers of protection. Using federated machine learning, organizations can collaboratively train models on these diverse datasets without ever moving or exposing the raw information.

This approach drastically improves model performance—such as increasing fraud detection accuracy or refining predictive maintenance—without compromising the integrity of the original records. By keeping data at the source, organizations can comply with the strictest privacy standards while finally accessing the "data goldmines" required for innovation.

3. Handling massive volumes at the source

Modern operations generate such vast amounts of data that moving it all to a centralized cloud is often too slow and prohibitively expensive. Think of a global network of high-resolution industrial sensors, thousands of connected edge devices, or decentralized satellite offices. These sources produce massive datasets in real-time.

Traditional architectures hit a "bandwidth wall" when trying to centralize this volume. Federated learning bypasses this bottleneck by keeping the data on the edge. Instead of wasting resources on massive data transfers, the training happens locally, and only the lightweight model updates are shared. This makes it possible to build AI that is both more accurate and significantly more cost-effective.

Federated learning uses the computing power on local devices. This can save millions in cloud storage and data transfer costs. Beyond these operational savings, this approach solves a critical strategic challenge: model evaluation.

In a centralized world, testing an external model on your proprietary data requires you to hand over your most valuable asset. Federated AI flips this script. It allows an organization to "bring the model to the test," evaluating incoming algorithms directly on their own private datasets. This gives leaders a transparent, data-driven understanding of exactly which models perform best for their specific use cases before committing to a full-scale deployment or partnership. By testing in place, you can benchmark performance against real-world edge cases that never leave your secure environment.

Types of federated learning

Federated learning includes various approaches designed for specific challenges in distributed machine learning. While the core principle of keeping data decentralized remains the same, the implementation varies. Here are the four main types:

-

Centralized federated learning.

This is the most common approach. A central server coordinates the entire process: it sends the global model to clients, receives their local updates, and aggregates them. This is ideal when a trusted entity—like a tech company or a healthcare consortium—manages the network. -

Decentralized federated learning.

This removes the central server entirely. Clients communicate directly through a peer-to-peer network, acting as both learners and aggregators. The global model emerges from collective interactions. This is best for scenarios where no single authority exists or where maximum resilience is needed. -

Heterogeneous federated learning

Not every device has the same power. This approach accommodates devices with different computational resources and data quality. It uses adaptive algorithms to ensure that a smartphone, an IoT sensor, and a high-end server can all contribute to the same model effectively. -

Cross-silo federated learning.

This focuses on collaboration between a small number of reliable organizations (silos) rather than millions of individual devices. It is common in inter-bank fraud detection or collaborative medical research, where participants have large datasets and stable connections but must navigate complex legal and confidentiality agreements.

Real-world applications of federated machine learning

High-stakes sectors best demonstrate the real value of federated learning:

Healthcare: protecting patient data

Perhaps the most noble applications of federated learning are in medicine. To train an AI to detect a rare cancer, you need thousands of scans. No single hospital has enough.

By using federated learning, ten hospitals can train a model together. The AI learns disease patterns from a large dataset from around the world. However, patient data stays safe within each hospital's secure network

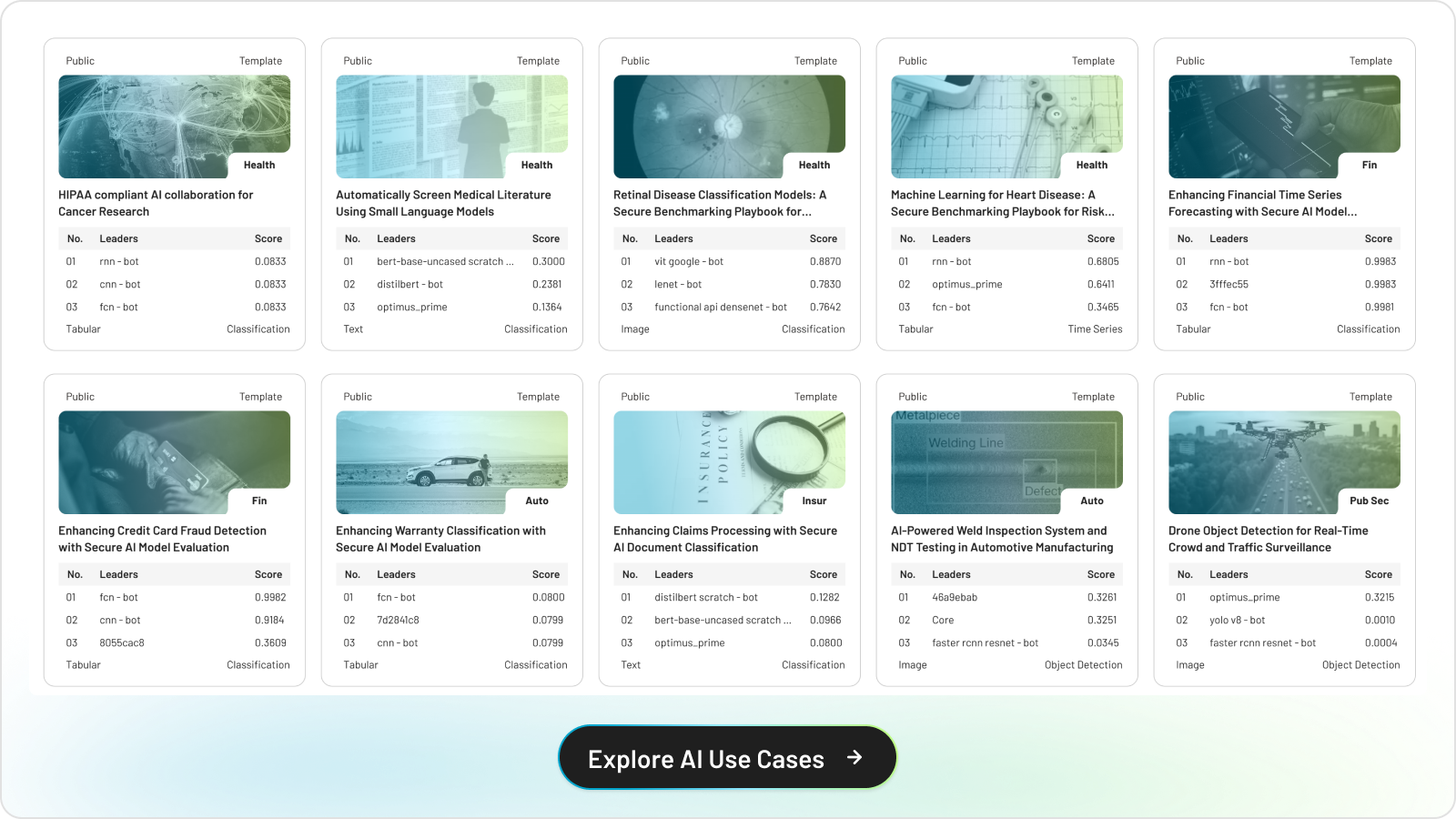

Explore tracebloc sample AI use cases

Finance: anti-money laundering

Fraudsters don't stay at one bank; they move between institutions. However, banks cannot share customer data due to privacy laws.

Federated learning enables banks to train a shared fraud-detection model. Each bank trains the model using its own transactions. The shared model learns to spot global fraud patterns. It keeps individual account details private.

Manufacturing: distributed quality control

An international corporation with fifty factories can use federated AI to improve quality control. Instead of sending video feeds from every assembly line to a central HQ, each plant trains the models locally. The plants share their "learnings" about defects, and the entire global network becomes more efficient.

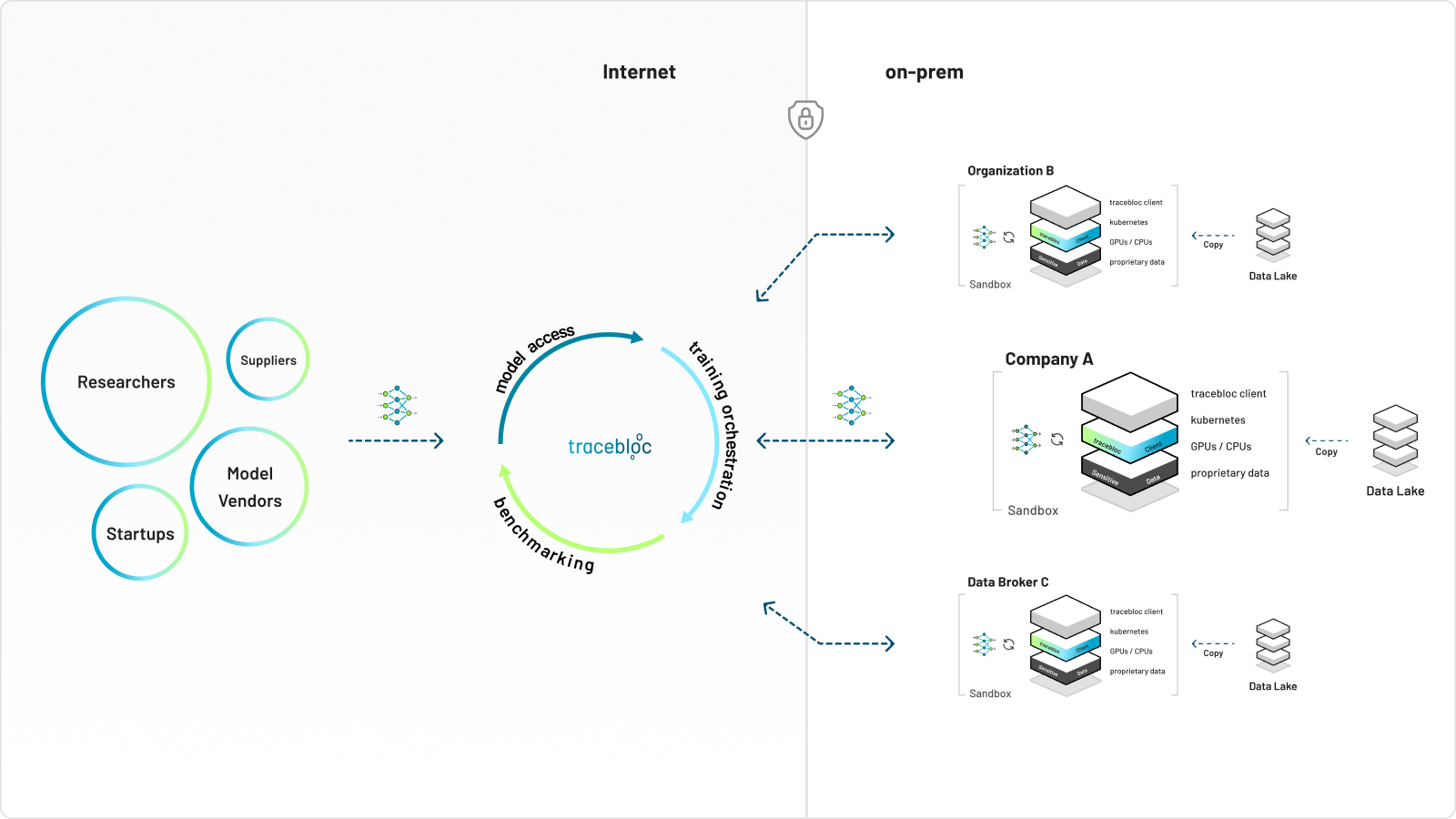

How tracebloc supports secure federated learning

While the theory of how federated learning works is sound, the implementation is incredibly complex. It’s one thing to run a pilot; it’s another to manage thousands of models and inferences simultaneously across a global network.

The true challenge lies in the "messiness" of the real world. Unlike a uniform cloud environment, edge data lives on a chaotic mix of hardware. You are often dealing with different operating systems, fluctuating RAM, and a variety of chip architectures: from standard Intel processors to specialized ARM chips. Manually optimizing model pipelines to run smoothly across this fragmented landscape is a massive logistical drain.

tracebloc essentially acts as the "invisible layer" that handles this complexity. We abstract away the heavy lifting of infrastructure, hardware compatibility, and resource scaling. By managing all this, we allow your data scientists to get back to their most valuable goal: building amazing models that solve real problems for your organization.

Unlocking high-accuracy innovation with federated learning

We recognized that the biggest hurdle in AI isn't just privacy: it’s access to relevant data. Research institutes and hospitals have high-quality data. However, the best AI talent and models are often found elsewhere.

tracebloc acts as a secure bridge. It lets data scientists access global expertise without risking data exposure. By enabling large-scale AI training and evaluation inside your own secure environment, you can:

-

Invite external innovation:

Grant top-tier AI vendors access to your "secure sandbox" to train or evaluate their models on real sensitive data. -

Compare and benchmark:

Run head-to-head evaluations to see which third-party algorithm performs best on your specific patient demographics without ever giving them a copy of your dataset. -

Maintain full sovereignty:

Models undergo automated security checks and run as isolated artifacts. Because the execution happens within your controlled infrastructure, you retain 100% control over the data and the audit logs.

The new standard: leveraging your data in a secure way

The old way of doing AI was a burden. Organizations had to acquire, host, and maintain massive AI models themselves. tracebloc introduces a new standard where you focus on the outcomes rather than the infrastructure.

For your data science teams, this means:

-

Intelligence over maintenance:

You don't need to own or manage the underlying AI models. You can easily access the intelligence provided by the tracebloc secure bridge. It offers precise predictions and accurate insights. -

Collaboratively learn without complexity:

Multiple institutions can collaboratively learn from one another’s findings. Access global insights and reach precise, accurate conclusions without the technical headache of managing dozens of different vendors. -

On-demand validation:

Instead of committing to one specific model, you use tracebloc to benchmark many. You use the "intelligence" of the best performer for your data. This ensures your AI strategy is always supported by the most accurate tools.

Take the next step with tracebloc

Talk to our team to apply your use case. Better outcomes start with AI that works on real-world data: unlocking intelligence from assets that are too sensitive, too regulated, or simply too massive to ever be moved.